Design and Development of a Hand Gesture Controlled Soft Robot Manipulator

The WHO (World Health Organization) Ebola Road Map statistics (published on 28 August 2014) reflect our current capacity to safely handle an unknown epidemic. They show that we still need to develop more sophisticated technologies to reduce death counts in epidemic situations. One attempt to reduce deaths among medical sector workers is the design and development of a hand gesture controlled soft robot manipulator. It will help medical sector workers to access an unfamiliar situation remotely and handle it carefully using their hand gestures.

This section introduces the project, together with an explanation of the technology currently in use, what we are going to develop, and the project’s main objectives. The Design section illustrates the overall design of the project and its mechanism. The Fabrication section describes how the system was fabricated, including 3D printing of the mold, fabrication of the elastomer based gripping mechanism and development of gesture control for the robot manipulator. The Testing section shows the output of the project and discusses its drawbacks and the future improvements planned for it as ongoing research.

Background

Introduction

Robotics, Artificial Intelligence and Automation have become the backbone of modern development, and researchers are increasingly focused on solving real-world problems. Among these fields, Robotics is one of the most prominent areas of development, and many industrial and domestic applications are readily available today. There are many types of fixed and mobile robots, and a few types have already been developed for various purposes in the medical sector, which is the main focus of this project. The design and development of a hand gesture controlled soft robot manipulator for medical applications is a novel methodology for developing a medical robot capable of gesture controlled soft gripping. The development is intended to increase safety and, at the same time, to motivate health sector workers facing a deadly viral disease such as Ebola in an epidemic environment. Lower disinfection costs, increased labor capacity and tele-presence would be added benefits of this application.

The role of technology in today's world

One of the main disadvantages of Robotics applications is the expertise required to operate the robot. This project aims to eliminate such factors in order to increase the efficiency and applicability of robots in the medical industry. Among the many available types of robots, bilateral systems are widely used. Many master designs are readily available to support bilateral systems. Among them, the Joystick (Remote Control), Electromyography, Voice Control, Haptic (Mechanical and Touch Sensitive), Gesture (Hand Gesture, Body Gesture and Eye Gaze) and BCI (Brain Control Interface) stand out prominently.

A research study was carried out to compare the available master designs and assess their applicability to bilateral system design for an epidemic environment. A literature survey of international research publications on bilateral medical systems over the last few decades was performed to assess the applicability, controllability (in operation), complexity (of the device), response, reliability and safety of the available master designs. Remote control and electromyography based technologies were found to be the oldest and most established techniques, while haptic technology is at an intermediate stage. These conventional systems have their own disadvantages and control issues. To overcome those issues, gesture based designs were introduced with the emergence of vision based systems, and today they are widely applicable and well known in research. BCI and voice control based techniques are still at the research level and are developing rapidly. The systems were assessed against the requirements of a bilateral system for an epidemic environment: cost effective, reliable, repeatable and independent of operator skill. It was found that, with present technology, gesture based control systems are more likely to satisfy the design requirements than other conventional and modern systems, and that they are likely to become widely applicable within the next few decades once their minor issues are overcome.

Contributions to the new technology

This research study provides the design techniques and methodologies needed to integrate the master side, the slave side and the communication between them, as well as the development of the soft robot. The most important features of such a system are a convenient HMI (Human Machine Interface) on the master side, a reduced time delay in tele-presence and a goal oriented design of the slave side. Soft robots provide an opportunity to bridge the gap between machines and people.

Compared to hard-bodied robots, soft robots have bodies made of truly soft and extensible materials, such as elastomers, which can deform and absorb much of the energy of an impact. Materials of this type, such as silicone and rubber elastomers, can accommodate sensing, power storage, actuation and computation. Algorithms can therefore be derived for the soft robot to deliver the desired behaviour, since the material allows a major increase in its degrees of freedom.

This project mainly concerns the design and fabrication of the soft robot, and its integration with a manipulator type robot arm to form a soft robot gripper mechanism controlled by hand gestures.

Objectives

- Introduce an application of the development of a Hand Gesture Controlled Soft Robot Manipulator.

- Design & Development of a Soft Robotics actuating mechanism.

- Design & Development of a Pneumatics Controlling mechanism.

- Design & Development of a Hand Gesture imitating mechanism for the robot manipulator and the Soft gripping mechanism.

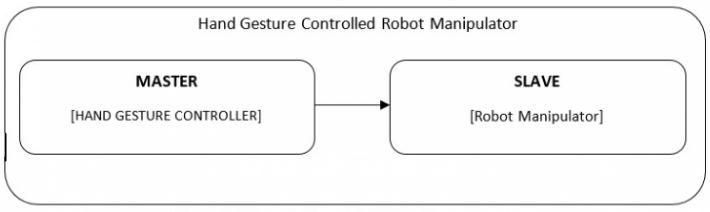

Design

The Hand Gesture Controlled Soft Robot Manipulator is designed as a bilateral system. The design is divided into the master side, the slave side and the communication between them, as shown in the above figure.

The master side is the Hand Gesture Controlling Mechanism and the slave side is the Robot Manipulator Mechanism with a Soft Gripper. The two systems were designed individually and then integrated into one design.

Overall Design

Robot Manipulator & Fingers

Robot Manipulator

A five (5) degrees of freedom robot manipulator was used for the development.

Finger (Elastomer based actuator)

This actuator consists of two main parts, the main body and the base. The main body consists of eleven (11) chambers connected in series. In the main body, the chamber separation walls were made thinner than the other walls so that the actuator bends without expanding. The base is a rectangular plate. SolidWorks CAD software was used to design the molds of the gripping actuators and to export .stl files for 3D printing.

The figure shows the Robot Manipulator and the elastomer based gripping actuators.

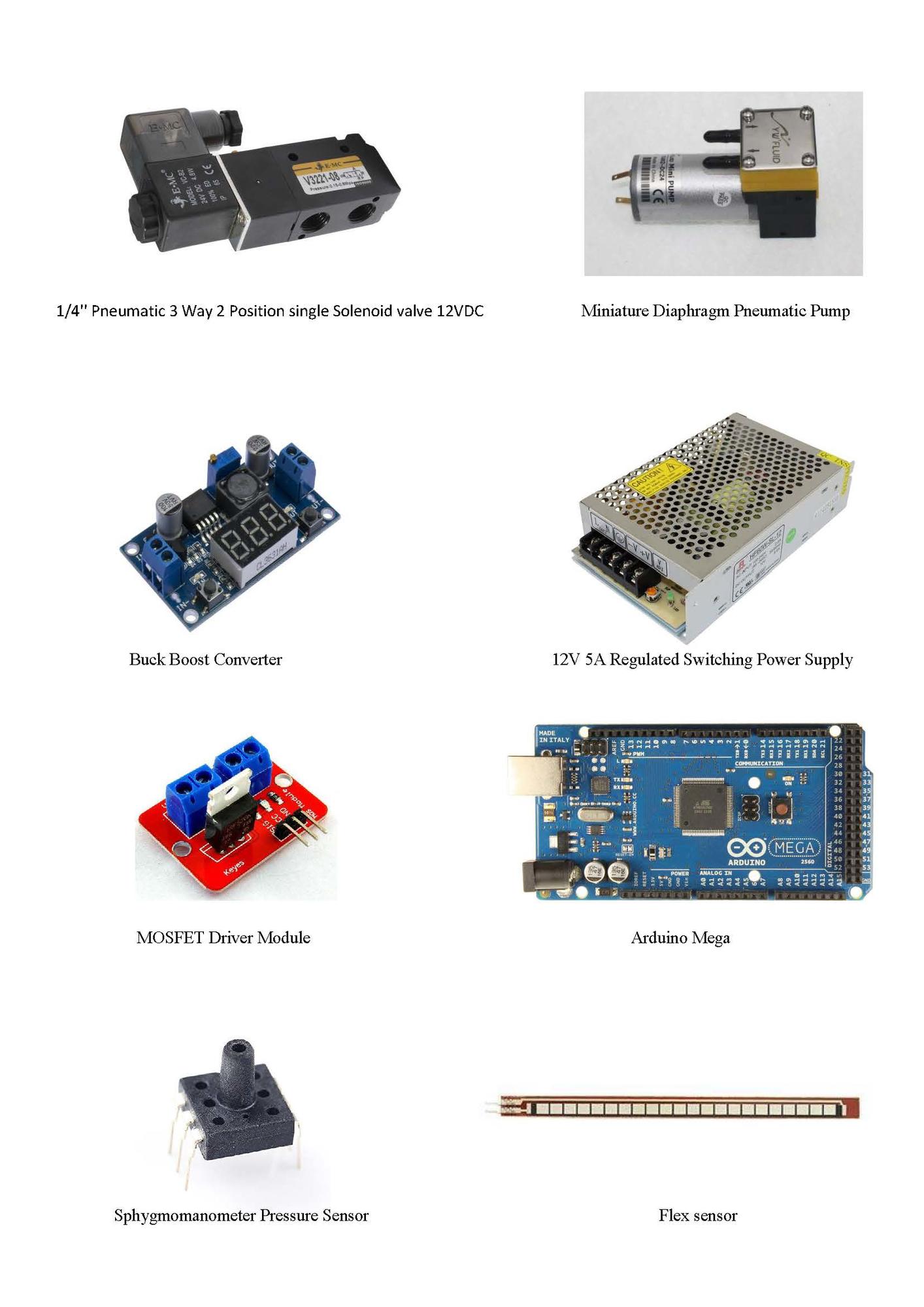

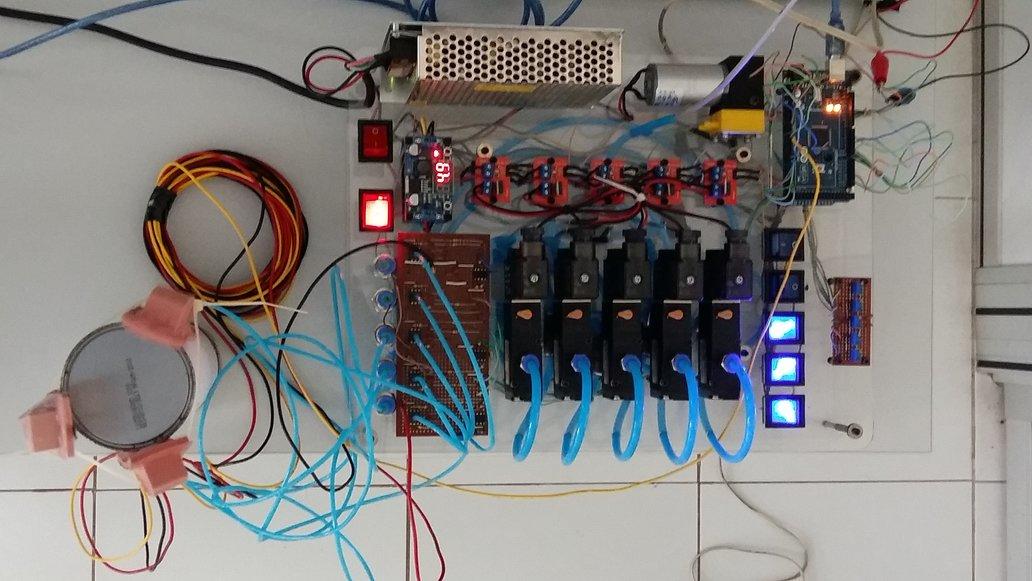

Control Board & Connection

The control board for the Soft Gripping Fingers was built from the items listed in Table 01. A common 12V 5A regulated switching power supply module was used to power the whole control board. 1/4'' pneumatic 3-way 2-position spring-return 12VDC solenoid valves were used to control the air flow into the actuators, while a miniature diaphragm pneumatic pump providing 50psi of air pressure generated a continuous air supply. An Arduino Mega interfaces the control board with the Processing IDE, which connects to the Leap Motion Controller and runs the rest of the control algorithm. A buck converter, which converts 12VDC into 5VDC, and MOSFET driver modules were used to switch the solenoid valves from the 5VDC outputs of the Arduino Mega. A sphygmomanometer pressure sensor with a differential amplifier circuit was used to feed the input air pressure to the Arduino Mega, and a flex sensor was used to detect the bending of the actuator.

Table 01 shows the list of items needed to develop a control board with 5 solenoid valves.

Table 01: List of items for the Control Board

| No. | Item with description | Quantity |
|---|---|---|
| 1 | 1/4'' Pneumatic 3 Way 2 Position single Solenoid valve 12VDC | 5 |
| 2 | 1/4'' NPT male to Tube OD 6mm Straight Connector Push In Fitting | 10 |
| 3 | Tube OD 6mm Union cross Push In Fitting | 1 |
| 4 | Tube OD 6mm Union Y Push In Fitting | 7 |
| 5 | Tube OD 6mm Pneumatic Bulkhead Union Push In Fitting | 5 |
| 6 | OD 6mm Pneumatic Tube | 6m |
| 7 | Miniature Diaphragm Pneumatic Pump | 1 |
| 8 | MOSFET Driver Module | 5 |
| 9 | Buck Boost Converter | 1 |
| 10 | Sphygmomanometer Pressure Sensor | 5 |
| 11 | Rocker toggle switch x 8 (Red 2, blue 5) | 7 |
| 12 | Potentiometer | 5 |
| 13 | 12V 5A Regulated Switching Power Supply | 1 |
| 14 | Arduino Mega | 1 |
| 15 | Flex sensor | 1 |
| 16 | Cast Acrylic sheet | - |
| 17 | Heat-shrinkable tubing, jumper wires, lugs, cable ties, hot glue, screws, nuts, etc. | - |

Hand Gesture Controlling

The hand gesture controller design was based on stereo vision technology. Stereo vision is the extraction of 3D information from digital images. It is an artificial form of stereopsis in which two cameras are used to obtain depth and 3D structural information.

The literature survey reveals that 3D data acquisition, and with it the number of potential industrial applications, has increased vastly within the last few years. The accuracy and robustness of 3D sensors have increased while the products themselves have become inexpensive. In the literature, 3D sensors are mainly used for object tracking, motion analysis, 3D scene reconstruction and gesture based user interfaces.
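The way two cameras yield depth can be illustrated with the textbook relation for a rectified, parallel camera pair, Z = f · B / d. The sketch below is only an illustration of that general principle: the function name and the numeric values are hypothetical, and it does not describe the internal tracking algorithm of any particular sensor.

```python
def depth_from_disparity(focal_length_px: float,
                         baseline_mm: float,
                         disparity_px: float) -> float:
    """Depth (mm) of a point seen by two rectified, parallel cameras.

    focal_length_px: focal length expressed in pixels (assumed value)
    baseline_mm:     distance between the two camera centres (assumed value)
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_mm / disparity_px


# Hypothetical numbers, chosen only to show the arithmetic.
z = depth_from_disparity(focal_length_px=400.0, baseline_mm=40.0, disparity_px=80.0)
print(z)  # 200.0
```

A larger disparity means the point is closer to the cameras, which is why the measurement resolution of such sensors is best at short range.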

The main reasons for choosing Leap Motion Controller as the 3D sensor for hand gesture detection are as follows.

• Low cost. (Compared with other available 3D sensor modules, this one is very cheap.)

• The range of the sensor (1m) suits the application.

• The sensor module is specially designed for hand gesture tracking.

• A free SDK (Software Development Kit) and libraries are available.

• Availability of free support. (Blogs, forums and free testing software and applications.)

• MATLAB compatibility. (Mat-leap integration between MATLAB and Leap is possible.)

• Application novelty for manipulator robot integration.

• The accuracy suits the application.

• Lightweight, portable, plug and play device.

Both the hardware and the software of the Leap Motion are designed around hand gesture application development. The features listed above are readily available with the system and are the reasons for selecting this device.

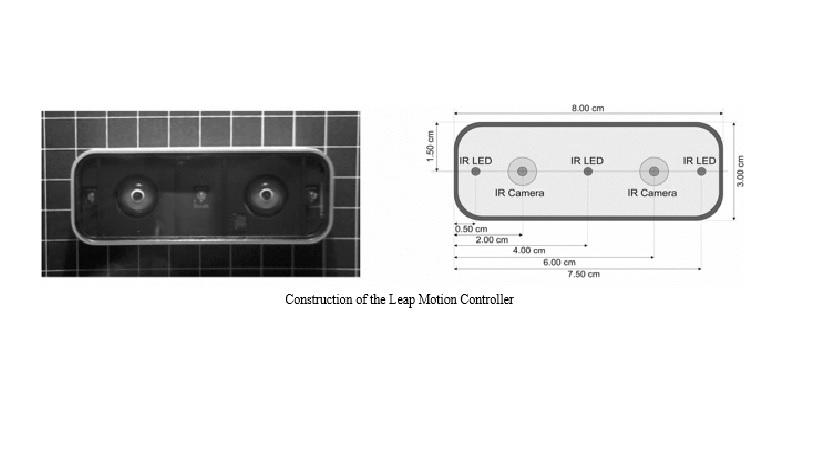

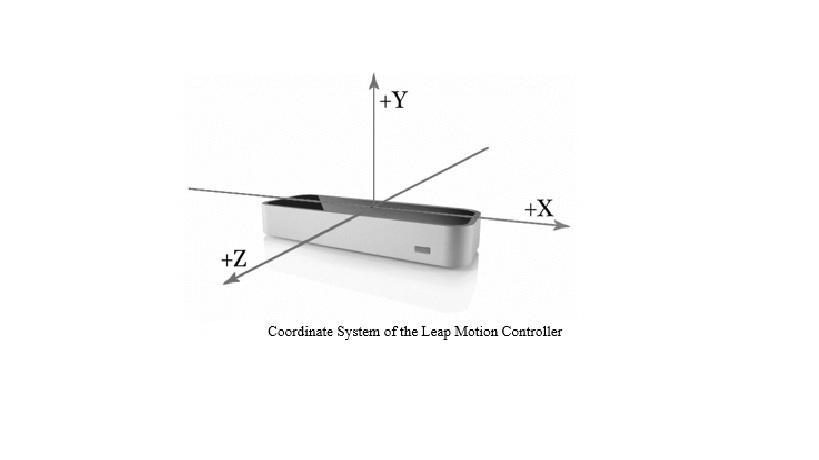

The Leap Motion Controller, in conjunction with its API (Application Programming Interface), delivers the positions in Cartesian space of predefined objects such as fingertips and pen tips. It is designed as a USB (Universal Serial Bus) peripheral device. Two monochromatic IR cameras and three infrared LEDs (Light Emitting Diodes) are assembled as shown in the figure. The LEDs generate a 3D pattern of dots of IR light, and the cameras are arranged to capture 300 frames per second of reflected data. The data is then sent to the V2 Tracking software, where the 2D frames generated by the two cameras are further analyzed to generate 3D positioning data.

As shown in the above figure, the Leap Motion Controller uses a right-handed Cartesian coordinate system. The origin lies on the top of the Leap Motion Controller. The X and Z axes lie in the horizontal plane and the Y axis is vertical. The following table shows the units of measurement used by the Leap Motion Controller. The measurements can vary according to the external conditions.

Measurements of Leap Motion Controller

| Parameter | Measurement unit |
|---|---|
| Distance | Millimeters |
| Time | Microseconds |
| Speed | Millimeters/second |
| Angle | Radians |
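Because the sensor reports values in the units listed above, readings usually need converting before they are combined with other quantities on the robot side. The following is a minimal sketch of such a conversion. The function name and the dictionary keys are hypothetical and are not part of the Leap Motion SDK.

```python
import math


def convert_leap_units(distance_mm: float, time_us: float,
                       speed_mm_s: float, angle_rad: float) -> dict:
    """Convert readings from the sensor's native units to metres, seconds and degrees."""
    return {
        "distance_m": distance_mm / 1000.0,    # millimetres -> metres
        "time_s": time_us / 1_000_000.0,       # microseconds -> seconds
        "speed_m_s": speed_mm_s / 1000.0,      # mm/s -> m/s
        "angle_deg": math.degrees(angle_rad),  # radians -> degrees
    }


# Example values are made up for illustration.
reading = convert_leap_units(distance_mm=250.0, time_us=500_000.0,
                             speed_mm_s=120.0, angle_rad=math.pi / 2)
print(reading["distance_m"], reading["time_s"])  # 0.25 0.5
```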

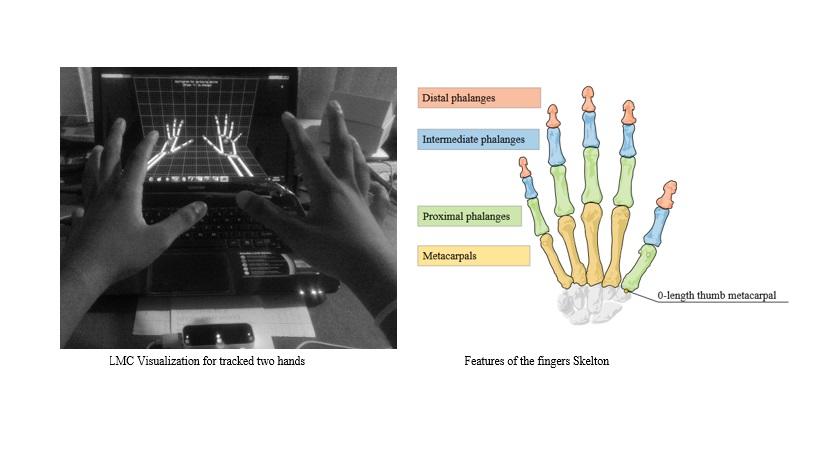

The figure below shows the Leap Motion Controller visualization and the skeleton generated for two hands. It also shows the finger bones that can be identified and tracked: the metacarpal (the bone inside the hand connecting the finger to the wrist, except for the thumb), the proximal phalanx (the bone at the base of the finger, connected to the palm), the intermediate phalanx (the middle bone of the finger, between the tip and the base) and the distal phalanx (the terminal bone at the end of the finger). Apart from tip detection, this sensor can be programmed to detect gestures, motions and tools.

Fabrication

The soft robotic gripping fingers were fabricated from Elastosil M4601 2-part silicone rubber, mixed in a 9:1 ratio (part A to part B), using 3D printed molds under controlled temperature conditions. The pneumatic control board was constructed to control the shape of the soft robotic fingers using the flex sensor input. The Hand Gesture Control was then integrated. The fabrication procedure, with the relevant control variables and parameters, is discussed in the following sub-sections.

Soft Fingers

- Initially, Elastosil parts A and B were measured in a 9:1 ratio: 90g of part A (white) and 10g of part B (red) were poured into the mixing cup.

- Then the mixing cup was put into the centrifugal mixer and the mixing process was run until both materials were mixed uniformly.

- The two parts of the main mold were hot glued together and the mixture was poured into the main mold.

- Then the mixture was poured into the base mold until half of its depth was filled.

- After that the molds were placed in a vacuum chamber and the valve was slowly opened.

- After the bubbles had risen and popped, the valve was slowly released and air was let back into the chamber.

- Bubbles that had not been drawn to the surface were popped using a barbecue stick.

- Before putting the molds into the oven, excess Elastosil was wiped off the main mold and a piece of paper was placed on top of the Elastosil in the base mold.

- Then both molds were placed in the oven, pre-heated to 65°C, for 15 minutes.

- During curing, the level of Elastosil in the chamber mold dropped at certain intervals. When this happened, extra Elastosil was poured in and the mold was returned to the oven for another 15 minutes to cure.

- Then both molds were removed from the oven and the top part of the actuator was removed from its mold. A fresh 10g mixture of Elastosil (9g of part A + 1g of part B) was made and a small amount of it was poured around the edge of the base mold. The top part of the actuator was then placed on the base mold and the edges were sealed using the extra Elastosil mixture. After that, the joined part was placed in the oven again for 15 minutes.

- Finally, the cured actuator was removed from the oven and released from the base mold.
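The two batches used in the steps above (100g and 10g) follow the same 9:1 split between part A and part B. A small helper such as the following sketch can compute the masses for any batch size. The function name is hypothetical and the default ratio is simply the one stated in the procedure.

```python
def elastosil_batch(total_g: float, ratio_a: float = 9.0, ratio_b: float = 1.0):
    """Return (mass of part A, mass of part B) in grams for a batch of total_g grams."""
    if total_g <= 0:
        raise ValueError("batch mass must be positive")
    parts = ratio_a + ratio_b
    return total_g * ratio_a / parts, total_g * ratio_b / parts


print(elastosil_batch(100.0))  # (90.0, 10.0) -> main casting batch
print(elastosil_batch(10.0))   # (9.0, 1.0)   -> sealing batch
```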

Control Board

Fabrication of cast acrylic parts

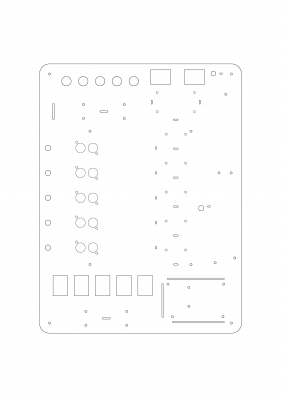

A 300mm x 400mm x 4mm cast acrylic sheet was used for the top and base plates of the control board. The figures show the AutoCAD drawings of both plates, which were used for the laser cutting process.

Main parts of the control board

The figures below show the main parts which were used to develop the pneumatic control board.

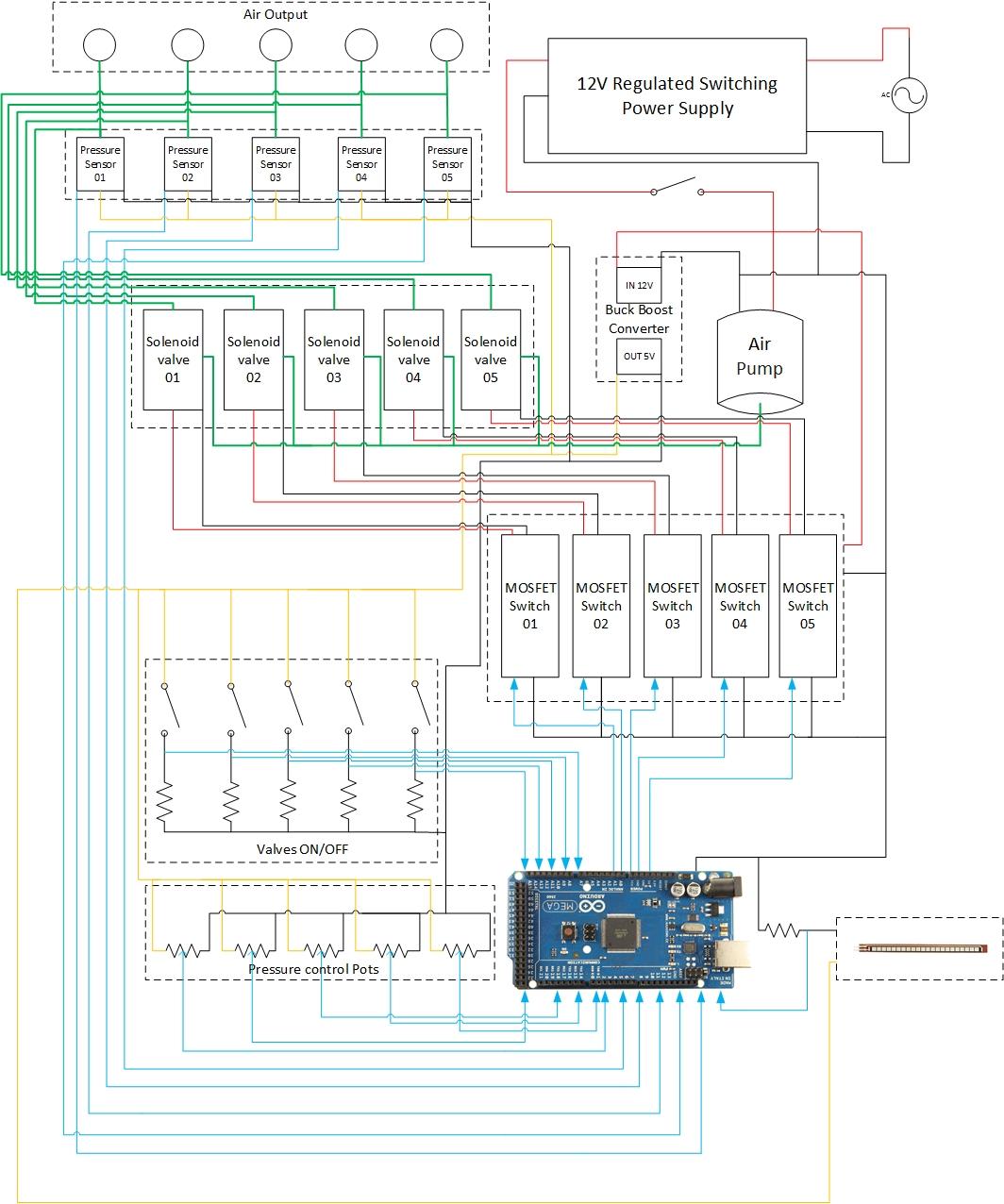

Wiring diagram of control board


Wiring specifications of the control board

| Wiring colour | Type of signal |
|---|---|
| Red | 12 VDC |
| Orange | 5 VDC |
| Black | 0 VDC |
| Blue | 0-5 VDC (logic) |
| Green | Pneumatic |

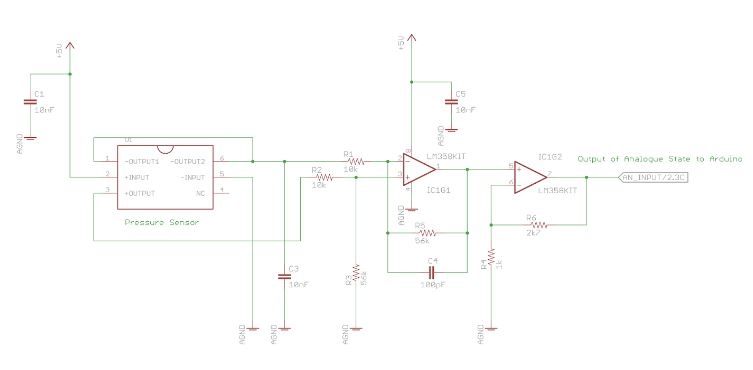

Circuit diagram of pressure sensor and differential amplifier

The following figure shows the circuit diagram of the sphygmomanometer pressure sensor and its connections to the differential amplifier. The pressure sensor is capable of measuring a range of pressure values. It was driven by 5 VDC and its output signal was 0-25 mV. Because this output signal was too weak to interface directly with an Arduino, a differential amplifier circuit was used to amplify the 0-25 mV signal to a swing of 200 mV to 3.5 VDC.

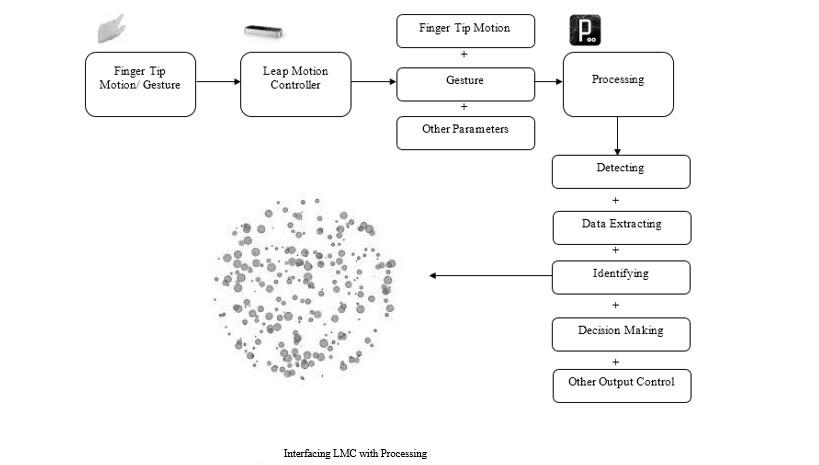
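The signal levels quoted above imply a voltage gain of about 132, since (3.5 V - 0.2 V) / 25 mV = 132. The sketch below works this out and estimates the readings that a 10-bit analog input with a 5 V reference (the default on an Arduino Mega) would return. It assumes an ideal, linear amplifier, and all names in it are hypothetical.

```python
SENSOR_MAX_V = 0.025   # 25 mV full-scale sensor output (from the text)
AMP_MIN_V = 0.2        # amplifier output at 0 mV input (from the text)
AMP_MAX_V = 3.5        # amplifier output at 25 mV input (from the text)
ADC_REF_V = 5.0        # assumed analog reference voltage
ADC_MAX_COUNT = 1023   # full scale of a 10-bit converter


def amplifier_gain() -> float:
    """Voltage gain needed to map the sensor span onto the output swing."""
    return (AMP_MAX_V - AMP_MIN_V) / SENSOR_MAX_V


def amplified_voltage(sensor_v: float) -> float:
    """Ideal amplifier output for a sensor voltage, clamped to the sensor span."""
    sensor_v = min(max(sensor_v, 0.0), SENSOR_MAX_V)
    return AMP_MIN_V + amplifier_gain() * sensor_v


def adc_count(voltage: float) -> int:
    """Approximate 10-bit reading for a voltage at the analog input."""
    voltage = min(max(voltage, 0.0), ADC_REF_V)
    return round(voltage / ADC_REF_V * ADC_MAX_COUNT)


print(round(amplifier_gain()))                           # 132
print(adc_count(AMP_MIN_V), adc_count(AMP_MAX_V))        # 41 716
```

Under these assumptions the pressure signal occupies roughly 41 to 716 of the 1024 available counts, leaving headroom below the 5 V reference.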

Hand Gesture Integration

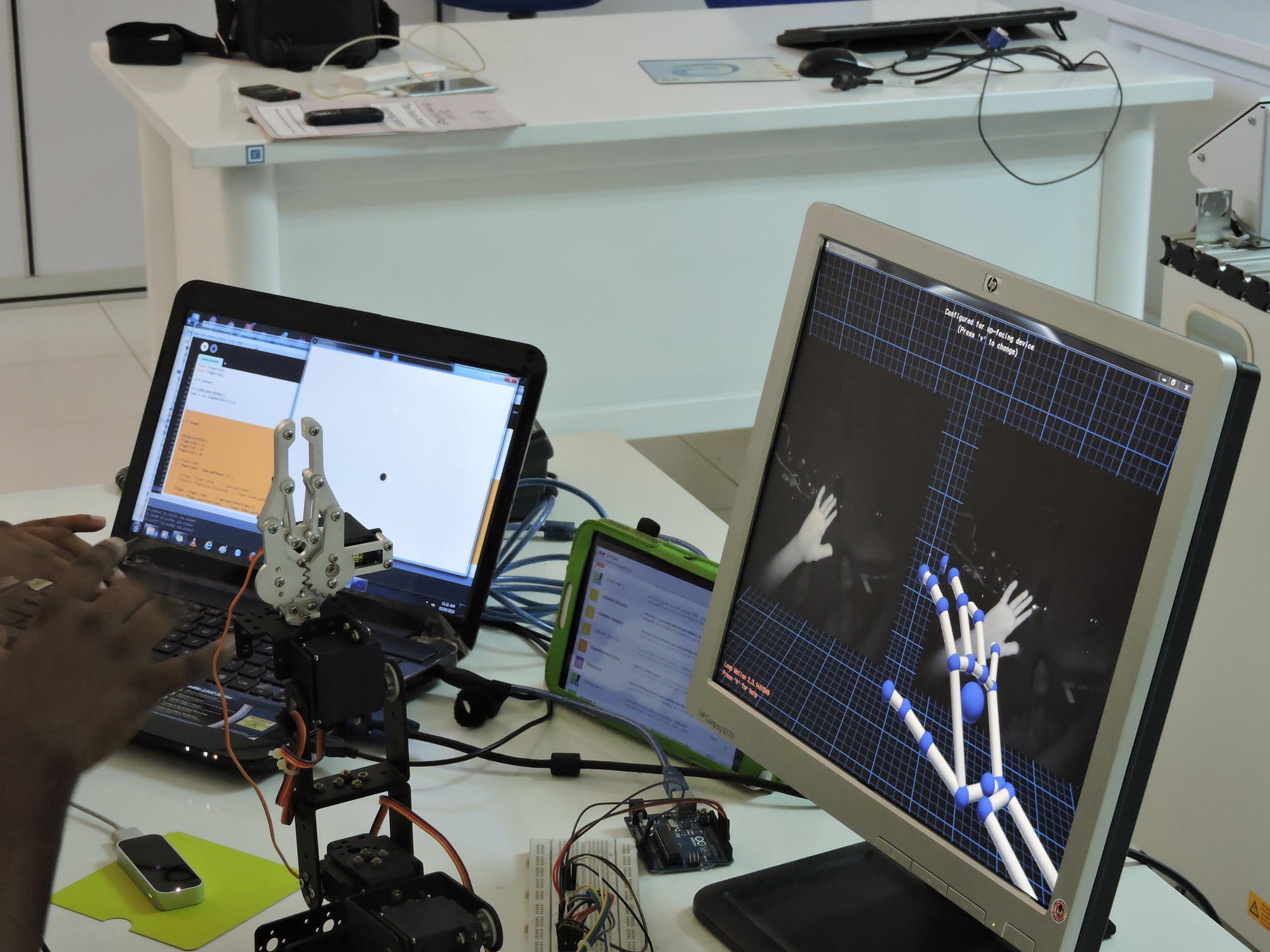

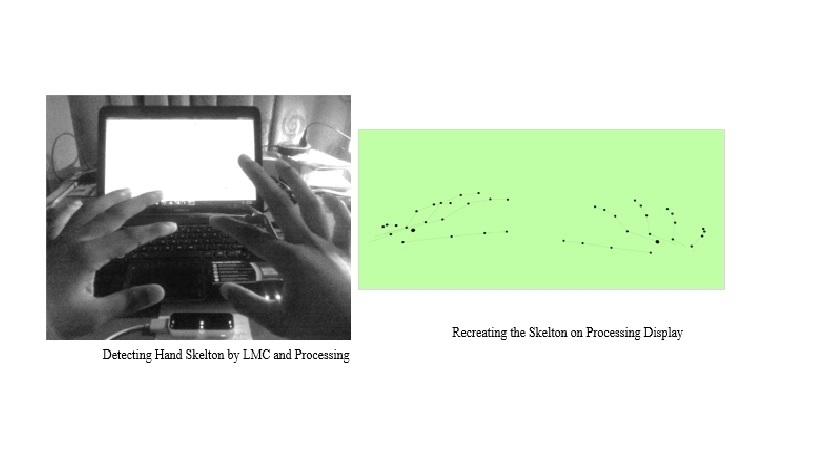

As shown in the above figure, the hands (including palm and fingers) were detected by the Leap Motion Controller and the data was sent to the Processing software. Processing is capable of detecting and extracting data, identification, decision making and controlling outputs, depending on the program written for it. Once control decisions have been made according to the application requirements and control algorithms, Processing can instruct the controller or another program on what should be done.

The above figure (right) shows the tracking of two hands developed using Processing. The skeletons of the two hands were generated and are shown in the above figure (left). The main design requirement of the application is to detect the fingertips and to extract position, orientation and velocity data from them.

The program was therefore developed to track the middle finger, the index finger and the thumb. The tracked fingertips and wrist were recreated in the Processing framework as square objects and a circular object. The index finger was used to extract position, orientation and velocity data for the robot.

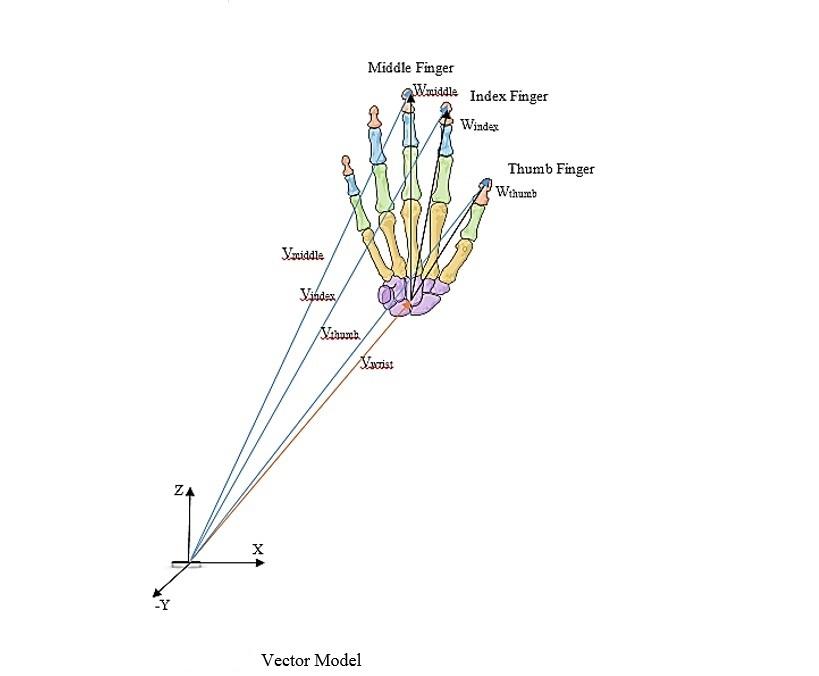

Then four vectors were drawn from the Leap Motion Controller coordinate frame to each fingertip and to the wrist. Using the following relationship, an expression for the wrist to fingertip distance was developed. The equation for the middle finger is as follows,

Vwrist + Wmiddle = Vmiddle

Wmiddle = Vmiddle - Vwrist

The same equation was repeated for each finger and the average of the magnitudes was then taken as follows,

K = ( | Wmiddle | + | Windex | + | Wthumb | ) / 3

The K parameter generated in this way is used to signal the solenoid valve. For smooth control, feedback was taken from the flex sensor attached to the soft finger, and the valve was then controlled according to the following pseudo code.
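The vector relationship and the averaging step can be sketched in a few lines. The coordinates below are made-up values in millimetres and the function names are hypothetical. In the real system the four position vectors would come from the hand tracking data.

```python
import math


def wrist_to_tip(v_wrist, v_tip):
    """W = V_tip - V_wrist, computed component by component."""
    return tuple(t - w for t, w in zip(v_tip, v_wrist))


def magnitude(v):
    """Euclidean length of a 3D vector."""
    return math.sqrt(sum(c * c for c in v))


def grip_parameter(v_wrist, v_middle, v_index, v_thumb):
    """K = (|W_middle| + |W_index| + |W_thumb|) / 3."""
    tips = (v_middle, v_index, v_thumb)
    return sum(magnitude(wrist_to_tip(v_wrist, t)) for t in tips) / len(tips)


# Illustrative positions (x, y, z) in mm, not measured data.
wrist = (0.0, 200.0, 0.0)
middle = (0.0, 200.0, -100.0)   # 100 mm from the wrist
index = (60.0, 280.0, 0.0)      # 100 mm from the wrist
thumb = (30.0, 200.0, -40.0)    # 50 mm from the wrist
k = grip_parameter(wrist, middle, index, thumb)
print(round(k, 2))  # 83.33
```

Closing the hand shortens all three wrist to fingertip vectors, so K falls as the hand closes and rises as it opens.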

P = flex sensor reading (analog value read over serial)

K = wrist to fingertip parameter from the hand tracking

Initialise K

Initialise P

Read P (serial analog read)

If P is smaller than or equal to K

Write digital output HIGH

Else

Write digital output LOW
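The comparison in the pseudo code is a simple on-off rule, and it can be exercised without any hardware. The sketch below replaces the serial reads and the digital writes with plain Python values. The function names and the sample readings are hypothetical.

```python
def valve_command(flex_reading: int, k_setpoint: int) -> bool:
    """Return True (output HIGH) while the flex reading P is at or below K."""
    return flex_reading <= k_setpoint


def run_loop(readings, k_setpoint):
    """Apply the comparison to a sequence of flex sensor readings."""
    return ["HIGH" if valve_command(p, k_setpoint) else "LOW" for p in readings]


# Simulated flex sensor readings while the finger bends.
print(run_loop([40, 90, 100, 120], k_setpoint=100))
# ['HIGH', 'HIGH', 'HIGH', 'LOW']
```

In this sketch the output stays HIGH until the flex reading exceeds K, at which point it switches LOW.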

Testing

This work demonstrates elastomeric foams as a new material platform for designing soft robot grippers. These foams provide the unique capability to produce truly 3D actuating structures. The soft robot is actuated by compressed air that comes from the air tank and runs through the miniature diaphragm pneumatic pump and the electro-pneumatic solenoid valves. The electro-pneumatic solenoid valves are controlled by the MOSFET driver modules via the Arduino board using USB serial communication. Experimental results are shown in the sub-topics below and in the final demonstration videos.

Soft Robot Fingers


Soft Robot Fingers controlling with LMC

The Cartesian coordinates (X, Y and Z values) of the index finger, middle finger, thumb and wrist were tracked, and the wrist to fingertip parameter (K) was then obtained by the vector addition method. The K value ranged from a minimum of 50 to a maximum of 150 (both calibrated digital values of the Arduino).

The changing coordinates of the moving human hand are precisely tracked and monitored by the Leap Motion Controller and the movement is displayed on the adjoining screen. The soft robot arm recreates the movement made by the human hand using its elastomer based fingers, as demonstrated in the next section.

Final Demonstration (Video), Conclusion & Further Implementation
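The calibration procedure behind the 50 to 150 range is not described here. One possible approach, shown purely as an assumption, is a clamped linear mapping from the measured wrist to fingertip distance onto that range. The distance limits `closed_mm` and `open_mm` are hypothetical placeholders, as is the function name.

```python
K_MIN = 50    # calibrated minimum from the text
K_MAX = 150   # calibrated maximum from the text


def scale_to_k(distance_mm: float,
               closed_mm: float = 40.0,
               open_mm: float = 120.0) -> int:
    """Map a wrist to fingertip distance linearly onto [K_MIN, K_MAX], with clamping."""
    if open_mm <= closed_mm:
        raise ValueError("open_mm must be greater than closed_mm")
    fraction = (distance_mm - closed_mm) / (open_mm - closed_mm)
    fraction = min(max(fraction, 0.0), 1.0)   # keep out-of-range hands inside the limits
    return round(K_MIN + fraction * (K_MAX - K_MIN))


print(scale_to_k(40.0), scale_to_k(80.0), scale_to_k(120.0))  # 50 100 150
```

Clamping keeps the value sent to the control board inside the calibrated range even when the tracked hand briefly moves outside the assumed distance limits.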

Summary

The Hand Gesture Controlled Soft Robot was designed as a bilateral system whose two sides are integrated through a central communication system. The master side provides the input and, in response, the slave side recreates the movement of the master side. The master side of this design is the Hand Gesture Controlling Mechanism, where the hand gesture is tracked and monitored with high precision. Accuracy is supported by using a Leap Motion Controller to track the position, orientation and velocity of the fingertips as well as the position of the wrist. A dynamic variable then changes according to the algorithm we developed using the Processing IDE. The slave side of this integrated design is the Robot Manipulator Mechanism with the Soft Gripper. It produces the output of the process: the soft gripping mechanism is controlled by comparison with the flex sensor feedback so that it replicates the initial hand gesture.

Conclusion & Further Implementations

We tested the elastomer based fingers at different pressure settings. One major problem we identified is that the joint between the cavity section and the base section is not strong enough to let the finger bend smoothly and bear high pressures. As a result, some of the tested fingers burst and were damaged. In addition, we were unable to add pressure relief valves to the control board. Such valves would enhance safety and could also be used to prevent damage to the soft fingers. Furthermore, when all systems are running together, the fingertip and wrist visualization in Processing tends to freeze and lag. One possible solution to this problem is to introduce a standalone control platform, such as a Raspberry Pi or Intel Galileo, which would be able to work without a PC and properly synchronize the master and slave sides of the system.

One major further implementation for this project is enabling IoT (Internet of Things) in the system. With such a development the product would be commercially ready. We could also apply this gesture control to different types of soft robot manipulators, which would open doorways to many new applications in the medical, military and agricultural industries.

Final Test